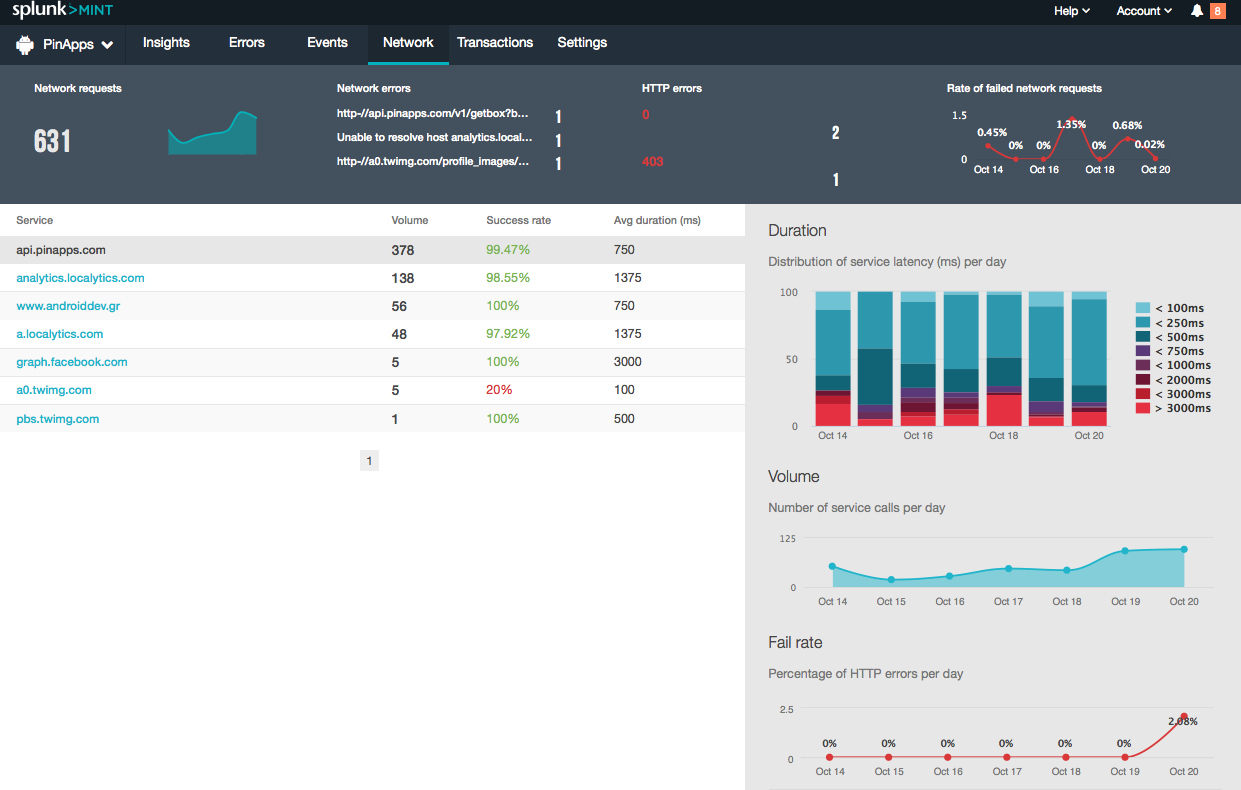

Distributed tracing is used by IT and DevOps teams to track requests or transactions through the application they are monitoring - gaining vital end-to-end observability into that journey. In summary, this approach can be valuable in troubleshooting complex deployments.Distributed tracing, also known as distributed request tracing, is a method of monitoring and observing service requests in applications built on a microservices architecture. You can also pipe the results to a Splunk report command such as top. If you are already using Splunk for central log management in environments that are typical to this sample SOA flow, then out of the box, you will have this capability to trace your SOA applications to gain better visibility at the individual user level for events that have occurred. What I’ve done is use the same example in the business context for troubleshooting SOA applications. Rather than go into the details for how transaction search works and the possible ways to use the above example, I invite you to read Eric “Maverick” Garner’s excellent blog entry discussing the steps in very readable language. Each grouping will also give you a duration time so that you know how long an end to end flow took. This search command will return groupings for all users with a session and user ID in a correlated manner, which follows the flow of the SOA. With these requirements, we could use a transaction search command to correlate all users for a certain time span within one search: eventtype="SOA_Logs" | transaction fields="session_id,use_id” connected=f maxspan=5m maxpause=5m You would use Splunk’s field extraction capability to extract these fields from your logs at search time. Also, the web server log file may at first have a session ID for the authenticated user, the application server may map this to an user ID and the rest of the nodes in the flow may use this user ID to identify the same user. 
For purposes of example, I am assuming that you already have an eventtype created called “SOA_Logs”, which is just a search that includes all the different sourcetypes for SOA log files. To make the situation more complex, what if you wanted to now trace the flow of all users at a certain point in time and correlate what each user’s session was doing on each node of the SOA flow? Splunk’s transaction search can be utilized in the Splunk Web application to do this rather easily. At this point, the user would have access to log events without having to log onto any production servers. Along comes Splunk to index all the log files using forwarders to send events to a central indexer.

The complexity begins as soon as something goes wrong in the flow as each node in the SOA may represent a cluster and there may be multiple log files being generated to record what has occurred. The diagram below illustrates this basic flow. The application server then sends a message to an Enterprise Service Bus (ESB), which in turn, routes the message to a Business Process Management (BPM) solution. One flow may include a web server, which initiates the request and sends a message to an application server. In a typical SOA deployment, you may have a situation where a user logs into a web site for procurement or purchasing, which kicks off a series of steps handled by different servers using heterogeneous technologies. In this post, I will discuss a more common issue that pertains to log management, operations support, and troubleshooting. I have mentioned in past blog entries that Splunk can be used to contribute to the governance and indexing of Service Oriented Architectures.
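To make the correlation idea more concrete, here is a minimal Python sketch of what a transaction-style grouping does conceptually: events that share a session ID or a user ID are chained into one group, subject to a maximum span and a maximum pause. This is only an illustration under stated assumptions, not how Splunk implements its transaction command. The event dictionary layout, the `Transaction` class, and the `group_transactions` function are hypothetical names introduced for this example; only the field names `session_id` and `user_id` and the 5-minute limits come from the search above.

```python
from dataclasses import dataclass, field


@dataclass
class Transaction:
    """A group of correlated events (hypothetical helper for this sketch)."""
    events: list = field(default_factory=list)

    @property
    def start(self):
        return self.events[0]["time"]

    @property
    def end(self):
        return self.events[-1]["time"]

    @property
    def duration(self):
        # Elapsed time between the first and last event in the group.
        return self.end - self.start


def group_transactions(events, fields=("session_id", "user_id"),
                       maxspan=300, maxpause=300):
    """Chain events that share any value of the given fields.

    Times are in seconds, so 300 stands in for the 5m limits.
    Simplifying assumption: an event joins the first open group it
    matches; two groups that later turn out to be related are not merged.
    """
    open_by_key = {}   # (field name, value) -> group currently open for it
    transactions = []
    for event in sorted(events, key=lambda e: e["time"]):
        keys = [(f, event[f]) for f in fields if event.get(f) is not None]
        if not keys:
            continue  # nothing to correlate on
        txn = next((open_by_key[k] for k in keys if k in open_by_key), None)
        if txn is not None:
            too_long = event["time"] - txn.start > maxspan
            too_quiet = event["time"] - txn.end > maxpause
            if too_long or too_quiet:
                txn = None  # limits exceeded, so start a new group
        if txn is None:
            txn = Transaction()
            transactions.append(txn)
        txn.events.append(event)
        for k in keys:
            open_by_key[k] = txn
    return transactions


# Made-up sample data: the web server only knows the session ID, the
# application server logs both IDs, and later nodes only log the user ID.
sample_events = [
    {"time": 0, "node": "web", "session_id": "s1"},
    {"time": 2, "node": "app", "session_id": "s1", "user_id": "alice"},
    {"time": 3, "node": "web", "session_id": "s2"},
    {"time": 5, "node": "esb", "user_id": "alice"},
    {"time": 6, "node": "app", "session_id": "s2", "user_id": "bob"},
    {"time": 9, "node": "bpm", "user_id": "alice"},
    {"time": 11, "node": "esb", "user_id": "bob"},
]

transactions = group_transactions(sample_events)
for txn in transactions:
    print([e["node"] for e in txn.events], txn.duration)
# ['web', 'app', 'esb', 'bpm'] 9
# ['web', 'app', 'esb'] 8
```

The application server event is what ties the two identifiers together: because it carries both the session ID and the user ID, later events that only carry the user ID can still be attached to the group that started on the web server.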
